Understanding IQ Scores: What the Numbers Actually Represent

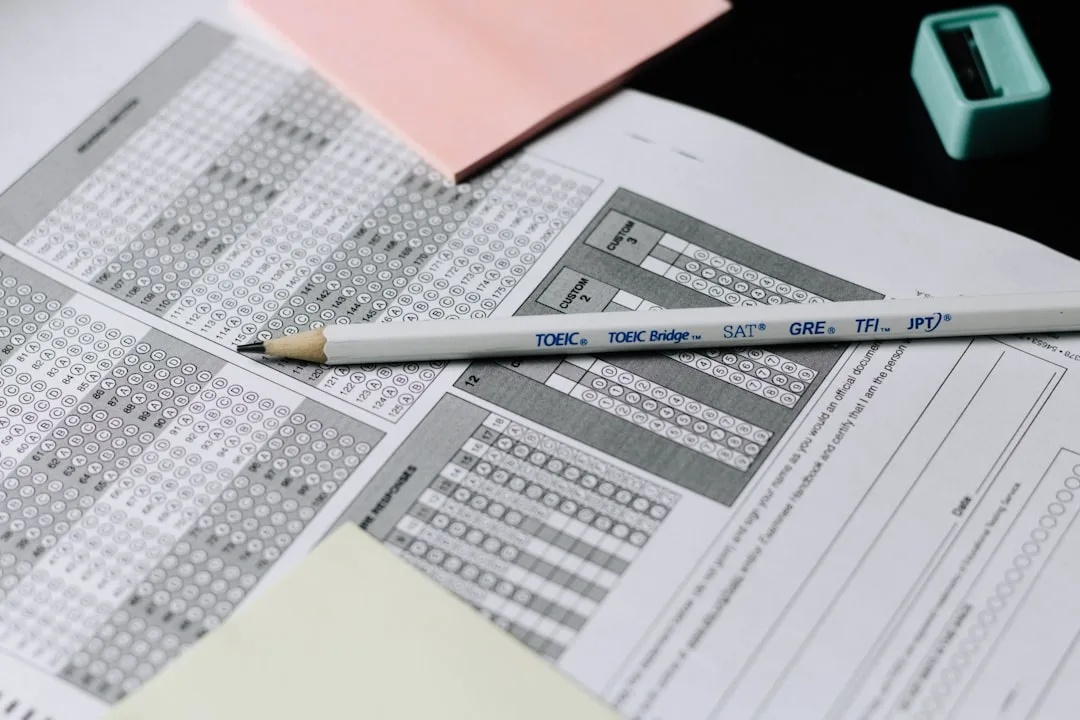

The intelligence quotient (IQ) is one of the most widely recognized metrics used to describe cognitive ability. At its core, IQ represents a statistical comparison between an individual's performance and that of a defined reference population. Rather than measuring accumulated knowledge or formal education, IQ tests aim to capture underlying mental processes such as reasoning, pattern recognition, working memory, processing efficiency, and abstract problem-solving.

IQ scores are designed to reflect how efficiently a person can analyze information, identify relationships between concepts, and solve unfamiliar problems under time constraints. This focus on general reasoning ability is what distinguishes IQ testing from academic exams, which are heavily influenced by schooling, language exposure, and cultural background.

"There is no such thing as a culture-free test. But there is a difference between a test that measures reasoning ability and one that measures what you learned in school."

- Robert Sternberg, former president of the American Psychological Association

Historically, IQ scores were obtained through supervised, in-person assessments administered by trained professionals under tightly controlled conditions. These environments were designed to minimize external influences, ensure standardized instructions, verify participant identity, and maintain consistent motivation levels across test-takers.

In recent years, online IQ tests have become increasingly popular. Millions of people now search for ways to assess their intelligence digitally, often in a matter of minutes. As online testing becomes more common, an important question naturally arises:

How accurate and credible are online IQ tests compared to traditional assessments - and what does the data actually show?

The Statistical Foundation: How IQ Scores Are Distributed

IQ scores are typically standardized so that the population average is 100, with a standard deviation of 15. This structure allows scores to be interpreted consistently across large groups and across different test versions that share a common norming framework.
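Here is a minimal sketch of that standardization. The function converts a raw score into a deviation IQ using a norming sample's mean and standard deviation. The norm values are invented for illustration, and the simple linear z-score transform is an assumption. Real tests usually norm within age bands and may use smoothed or IRT-based scaling instead.

```python
def deviation_iq(raw_score: float, norm_mean: float, norm_sd: float) -> float:
    """Map a raw score onto the IQ scale (mean 100, SD 15) via a z-score."""
    if norm_sd <= 0:
        raise ValueError("norm_sd must be positive")
    z = (raw_score - norm_mean) / norm_sd  # distance from the norm mean in SD units
    return 100 + 15 * z


# Hypothetical norming statistics for one age band (illustrative only).
NORM_MEAN, NORM_SD = 32.0, 8.0

for raw in (24, 32, 40):
    print(raw, "->", deviation_iq(raw, NORM_MEAN, NORM_SD))
```

With these made-up norms, raw scores of 24, 32, and 40 map to 85, 100, and 115. Each step of one norm SD (8 raw points) moves the result by 15 IQ points.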

| IQ Range | Classification | Percentile | Approximate Prevalence |
|---|---|---|---|
| Below 70 | Significantly Below Average | < 2.3% | 1 in 44 |
| 70-84 | Below Average | 2.3-16% | 1 in 7 |
| 85-115 | Average | 16-84% | 2 in 3 |
| 116-129 | Above Average | 84-97.7% | 1 in 7 |
| 130-144 | Very High / Gifted | 97.7-99.9% | 1 in 47 |
| 145+ | Exceptionally High | > 99.9% | 1 in 740 |
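The percentile and prevalence figures follow from treating scores as normally distributed with mean 100 and SD 15. The sketch below assumes that idealized model and uses Python's standard-library `statistics.NormalDist`. It treats the cutoffs 70, 85, 115, 130, and 145 as exact boundaries, ignoring the rounding of integer scores.

```python
from statistics import NormalDist

IQ = NormalDist(mu=100, sigma=15)  # idealized population model


def percentile(score: float) -> float:
    """Percentage of the population expected to score below `score`."""
    return 100 * IQ.cdf(score)


# Continuous cutoffs approximating the table's integer ranges.
BANDS = [
    ("Below 70", float("-inf"), 70),
    ("70-84", 70, 85),
    ("85-115", 85, 115),
    ("116-129", 115, 130),
    ("130-144", 130, 145),
    ("145+", 145, float("inf")),
]

for label, low, high in BANDS:
    share = IQ.cdf(high) - IQ.cdf(low)  # probability mass inside the band
    print(f"{label:>8}: {100 * share:6.2f}% of the population")

print(f"IQ 130 sits at percentile {percentile(130):.1f}")
```

Under this model, the 70-84 and 116-129 bands each hold about 13.6% of people (roughly 1 in 7). The 145+ band holds about 0.13% (roughly 1 in 740).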

While these categories are commonly referenced, responsible interpretation requires additional context. Every IQ score contains measurement error, so the reported number is best understood as the center of a range (a confidence interval) rather than an exact point. Even the gold-standard Wechsler Adult Intelligence Scale (WAIS-IV) reports Full-Scale IQ with a 95% confidence interval of approximately plus or minus 5 points.
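This minimal sketch shows where such a band comes from. The standard error of measurement (SEM) equals the scale's SD multiplied by the square root of one minus the test's reliability. A 95% band spans about 1.96 SEMs on either side of the score. The reliability of 0.98 below is an illustrative assumption, not a figure quoted from the WAIS-IV manual. Some manuals also center the band on an estimated true score rather than the observed score, a refinement this sketch omits.

```python
from statistics import NormalDist


def sem(reliability: float, sd: float = 15.0) -> float:
    """Standard error of measurement: SD * sqrt(1 - reliability)."""
    if not 0.0 <= reliability <= 1.0:
        raise ValueError("reliability must be between 0 and 1")
    return sd * (1.0 - reliability) ** 0.5


def confidence_interval(score: float, reliability: float, level: float = 0.95):
    """Symmetric band of score +/- z * SEM around the observed score."""
    z = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for a 95% band
    half_width = z * sem(reliability)
    return score - half_width, score + half_width


# Assumed reliability of 0.98, chosen for illustration only.
low, high = confidence_interval(110, 0.98)
print(f"Observed 110 -> 95% band {low:.1f} to {high:.1f}")
```

At a reliability of 0.98, the band is about plus or minus 4 points. At 0.90, it widens to about plus or minus 9 points.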

"IQ is not a fixed quantity. It is an estimate, and like all estimates, it comes with uncertainty."

- Ian Deary, Professor of Differential Psychology, University of Edinburgh

Testing conditions also matter. Fatigue, distractions, emotional state, and motivation can all influence performance. Even in professional settings, scores can vary by 3 to 7 points between administrations. Online environments introduce further variability, making careful interpretation even more important.

Correlation Data: How Online Tests Compare to Clinical Assessments

The central question of online IQ test accuracy can be answered with data. Researchers have conducted multiple studies comparing online cognitive assessments to proctored clinical instruments. The key metric is the Pearson correlation coefficient (r), which measures how closely two sets of scores track each other.
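Here is a minimal implementation of Pearson's r. The paired scores are invented for illustration and do not come from any of the studies summarized in the table.

```python
from math import sqrt
from typing import Sequence


def pearson_r(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation: covariance divided by the product of the SDs.

    The 1/n factors cancel, so raw sums of deviations are used directly.
    """
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences of at least 2 scores")
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined when one set of scores is constant")
    return cov / (sx * sy)


# Invented paired scores for eight people; not data from any study.
clinical = [88, 95, 100, 104, 110, 115, 122, 130]
online = [92, 90, 103, 101, 114, 109, 125, 127]
print(f"r = {pearson_r(clinical, online):.2f}")
```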

| Comparison | Correlation (r) | Study Context |
|---|---|---|
| Well-designed online test vs. WAIS-IV | 0.78 - 0.85 | Multi-domain adaptive tests with timing controls |
| Raven's Progressive Matrices (online) vs. proctored version | 0.82 - 0.87 | Visual reasoning; minimal verbal confounds |
| Short online screening vs. full clinical battery | 0.60 - 0.72 | Abbreviated tests with fewer than 20 items |
| Entertainment-style "IQ quiz" vs. WAIS-IV | 0.20 - 0.40 | No psychometric validation, inflated scoring |

These numbers reveal a clear pattern: online format alone does not destroy accuracy. What matters is test construction. Multi-domain tests with adaptive item selection, strict timing, and large norming samples consistently achieve correlations above r = 0.75 with clinical instruments.

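By comparison, the test-retest reliability of the WAIS-IV itself is approximately r = 0.90 to 0.96 for its composite scores, with individual subtests somewhat lower. No test is perfectly reliable, so the highest correlation two tests can show is roughly the square root of the product of their reliabilities. Taken at face value, r = 0.78 to 0.85 means online and clinical scores share about 61% to 72% of their variance (r squared). After correcting for measurement error in both tests, the estimated overlap in reliable variance rises to roughly 75% to 90%, assuming the online test's own reliability is around 0.85.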
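This sketch makes the comparison between validity correlations and reliability concrete using two standard calculations. The first is shared variance (r squared). The second is Spearman's correction for attenuation, which estimates how two tests' true scores would correlate if neither test had measurement error. The reliabilities of 0.95 (clinical) and 0.85 (online) are assumptions chosen for illustration.

```python
from math import sqrt


def correlation_ceiling(rel_x: float, rel_y: float) -> float:
    """Highest correlation two tests can show, given their reliabilities."""
    return sqrt(rel_x * rel_y)


def disattenuated(r_xy: float, rel_x: float, rel_y: float) -> float:
    """Spearman's correction for attenuation: the estimated correlation
    between true scores if both tests were perfectly reliable."""
    return r_xy / correlation_ceiling(rel_x, rel_y)


# Assumed reliabilities: 0.95 for the clinical test, 0.85 for the online one.
REL_CLINICAL, REL_ONLINE = 0.95, 0.85

for r in (0.78, 0.85):
    r_true = disattenuated(r, REL_CLINICAL, REL_ONLINE)
    print(f"observed r={r:.2f}: shared variance {r * r:.0%}, "
          f"true-score r={r_true:.2f}, shared true variance {r_true ** 2:.0%}")
```

Corrected values above 1.0 can appear with small samples. They usually signal that the assumed reliabilities are too low or that the observed r is inflated by sampling error.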

"The medium is not the message in psychometrics. A well-constructed computerized test can be as valid as a paper-and-pencil one administered in a clinic."

- John Raven, developer of Raven's Progressive Matrices

What the Correlation Numbers Mean in Practice

Suppose a clinical test places you at the 75th percentile (an IQ of about 110). Assuming roughly normal score distributions, a correlation of r = 0.80 means a well-designed online test will place you near the 70th percentile on average. About two-thirds of such test-takers fall between roughly the 48th and 87th percentile. That is useful information, even though it is not identical to a proctored result.

A correlation of r = 0.30, typical of entertainment quizzes, means the score is barely related to actual cognitive ability. You might score 130 online and 105 in a clinical setting, or vice versa.
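This sketch shows where such percentile ranges come from. It assumes that clinical and online scores follow a bivariate normal distribution with correlation r, a simplification that real score distributions will only approximate. The expected online z-score is r times the clinical z-score (regression toward the mean). Plus or minus one standard error of estimate, sqrt(1 - r^2) in z units, brackets about two-thirds of test-takers.

```python
from statistics import NormalDist

STD = NormalDist()  # standard normal: mean 0, SD 1


def online_percentile_band(clinical_percentile: float, r: float):
    """Return (low, expected, high) percentiles on the second test.

    Assumes both scores are bivariate normal with correlation r. The
    expected z-score is r times the clinical z-score; low and high sit one
    standard error of estimate, sqrt(1 - r^2), on either side, which
    brackets about 68% of people.
    """
    if not 0 < clinical_percentile < 100:
        raise ValueError("percentile must be strictly between 0 and 100")
    z = STD.inv_cdf(clinical_percentile / 100)
    expected = r * z
    se = (1 - r * r) ** 0.5
    return tuple(100 * STD.cdf(v) for v in (expected - se, expected, expected + se))


for r in (0.80, 0.30):
    low, mid, high = online_percentile_band(75, r)
    print(f"r = {r:.2f}: expected percentile ~{mid:.0f}, "
          f"68% band {low:.0f} to {high:.0f}")
```

At r = 0.80, the sketch places the expected result near the 70th percentile, with a two-thirds band of roughly the 48th to 87th. At r = 0.30, the band stretches from roughly the 23rd to the 88th percentile. This is why low correlations say so little about any individual.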

Key Reliability and Validity Metrics: What to Look For

Understanding test quality requires knowing which statistical measures matter. Below is a reference table of the metrics that psychometricians use to evaluate any cognitive assessment, online or otherwise.

| Metric | What It Measures | Strong Benchmark | Weak Benchmark |
|---|---|---|---|
| Cronbach's Alpha | Internal consistency (do items measure the same construct?) | > 0.85 | < 0.70 |
| Test-Retest Reliability | Score stability over time | r > 0.80 | r < 0.60 |
| Convergent Validity | Correlation with established IQ tests | r > 0.70 | r < 0.50 |
| Standard Error of Measurement (SEM) | Point-score uncertainty | < 5 points | > 10 points |
| Item Discrimination Index | How well each item separates high- from low-ability test-takers | > 0.30 | < 0.15 |
| Norming Sample Size | Population used for score comparison | > 10,000 | < 500 |

A credible online IQ test should report or reference at least three of these metrics. If a platform provides no psychometric data whatsoever, the scores are essentially unverifiable.
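Two of these metrics can be computed directly from a table of right and wrong answers. The sketch below computes Cronbach's alpha and, as one common way to express item discrimination, the corrected item-total correlation (each item against the total of the other items). The 0/1 response data are invented. Population variances are used throughout, which leaves alpha unchanged as long as items and totals are treated the same way.

```python
from statistics import mean, pvariance
from typing import List, Sequence


def cronbach_alpha(responses: List[List[int]]) -> float:
    """Cronbach's alpha for a person-by-item score matrix (rows = people)."""
    k = len(responses[0])
    if k < 2:
        raise ValueError("need at least two items")
    item_vars = [pvariance([row[i] for row in responses]) for i in range(k)]
    total_var = pvariance([sum(row) for row in responses])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation between two equal-length sequences."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sum((x - mx) ** 2 for x in xs) ** 0.5
    sy = sum((y - my) ** 2 for y in ys) ** 0.5
    if sx == 0 or sy == 0:
        raise ValueError("an item everyone passes or fails has no discrimination")
    return cov / (sx * sy)


def item_discrimination(responses: List[List[int]], i: int) -> float:
    """Corrected item-total correlation: item i vs. the sum of the other items."""
    item = [row[i] for row in responses]
    rest = [sum(row) - row[i] for row in responses]
    return pearson(item, rest)


# Invented 0/1 answers: six test-takers (rows) by four items (columns).
data = [
    [1, 1, 1, 1],
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 1, 0],
    [0, 1, 0, 0],
    [0, 0, 0, 0],
]

print(f"alpha = {cronbach_alpha(data):.2f}")
for i in range(len(data[0])):
    print(f"item {i}: discrimination = {item_discrimination(data, i):.2f}")
```

With only four items and six people, alpha comes out at about 0.67, below the table's weak benchmark. A real evaluation would use far more items and test-takers.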

"Validity is not a property of the test. It is a property of the interpretation of test scores."

- Lee Cronbach, pioneer of modern reliability theory

IQ Testing Methods: Traditional vs. Online

There are several major approaches to measuring intelligence, each serving different purposes and audiences. Understanding these differences helps contextualize what online tests can and cannot achieve.

| Feature | Clinical / Proctored Test | Well-Designed Online Test | Entertainment Quiz |
|---|---|---|---|
| Administration | Trained professional | Self-directed with controls | Self-directed, no controls |
| Environment | Controlled room | Home (variable) | Anywhere |
| Typical Length | 60-120 minutes | 20-45 minutes | 5-15 minutes |
| Domains Tested | 4-5 cognitive domains | 2-4 cognitive domains | 1 domain or trivia |
| Adaptive Items | Yes (often) | Yes (best platforms) | No |
| Norming Sample | 2,000-4,000 stratified | 5,000-100,000+ online users | None or undisclosed |
| Cost | $150-$500+ | $0-$30 | Free |
| Clinical Use | Yes | No | No |
| Typical Cronbach's Alpha | 0.90-0.97 | 0.80-0.92 | Unknown |

Modern online platforms attempt to reduce environmental variability using advanced techniques such as adaptive item selection (where question difficulty adjusts to the test-taker's performance), strict timing rules, device consistency checks, and large-scale norming datasets.

These systems are grounded in modern psychometrics, including Item Response Theory (IRT), which models how individuals of different ability levels interact with specific test items. When implemented correctly, these methods allow online tests to approximate many of the properties of traditional assessments.
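Here is a minimal sketch of adaptive item selection under Item Response Theory. It uses the two-parameter logistic (2PL) model, one common IRT model; the article does not say which model any particular platform uses. After each answer, ability (theta) is re-estimated by expected a posteriori (EAP) estimation on a grid with a standard normal prior. The next item is the unused one with the most Fisher information at that estimate. The item bank, its parameters, and the toy responder are all hypothetical. Production systems also add exposure control, content balancing, and stopping rules.

```python
import math
from typing import Dict, List, Set, Tuple

Item = Tuple[float, float]  # (a: discrimination, b: difficulty)


def p_correct(theta: float, a: float, b: float) -> float:
    """2PL model: probability that a person with ability theta answers correctly."""
    return 1.0 / (1.0 + math.exp(-a * (theta - b)))


def information(theta: float, a: float, b: float) -> float:
    """Fisher information of a 2PL item at theta: a^2 * P * (1 - P)."""
    p = p_correct(theta, a, b)
    return a * a * p * (1.0 - p)


def eap_estimate(answers: List[Tuple[Item, bool]]) -> float:
    """Expected a posteriori ability on a grid from -4 to 4, standard normal prior."""
    grid = [g / 10 for g in range(-40, 41)]
    weights = []
    for theta in grid:
        w = math.exp(-0.5 * theta * theta)  # unnormalized N(0, 1) prior
        for (a, b), correct in answers:
            p = p_correct(theta, a, b)
            w *= p if correct else 1.0 - p  # likelihood of each observed answer
        weights.append(w)
    return sum(t * w for t, w in zip(grid, weights)) / sum(weights)


def next_item(theta: float, bank: Dict[str, Item], used: Set[str]) -> str:
    """Choose the unused item that is most informative at the current estimate."""
    remaining = [k for k in bank if k not in used]
    return max(remaining, key=lambda k: information(theta, *bank[k]))


# Hypothetical item bank: (discrimination, difficulty) on the theta scale.
BANK = {"q1": (1.2, -1.5), "q2": (1.0, -0.5), "q3": (1.5, 0.0),
        "q4": (1.1, 0.8), "q5": (1.4, 1.6)}

theta, used, answers = 0.0, set(), []
for _ in range(3):
    item_id = next_item(theta, BANK, used)
    used.add(item_id)
    correct = BANK[item_id][1] < 0.9  # toy responder: solves items easier than b = 0.9
    answers.append((BANK[item_id], correct))
    theta = eap_estimate(answers)
    print(f"{item_id}: {'correct' if correct else 'wrong'}, theta -> {theta:.2f}")
```

Starting from theta = 0, the sketch first picks the medium-difficulty, high-discrimination item `q3`. Each correct answer pushes the estimate up, and the next pick shifts toward harder items.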

Real-World Validation: Case Studies in Online Testing

Raven's Progressive Matrices Online

One of the most extensively validated online cognitive tests is the digital version of Raven's Progressive Matrices. Originally developed in 1936 by John C. Raven, this test uses non-verbal pattern completion to measure abstract reasoning. Multiple studies have confirmed that the online version produces results closely matching proctored administration, with correlations typically between r = 0.82 and r = 0.87.

The reason for this high agreement is straightforward: the test's visual, non-verbal format translates well to screens, and the tasks are difficult to "look up" online.

Mensa Admission Tests

Mensa, the international high-IQ society, accepts scores from a curated list of supervised tests. However, many Mensa chapters offer preliminary screening tests online. These screening tests are explicitly labeled as estimates rather than official scores. Research from Mensa's internal data suggests their online screening tests correctly predict Mensa-qualifying scores (IQ 130+) approximately 75-80% of the time - useful for self-assessment, but insufficient for formal membership decisions.

The Cambridge Brain Sciences Platform

Researchers at Cambridge Brain Sciences (formerly Cambridge Brain Challenge) have published peer-reviewed studies demonstrating that their web-based cognitive tasks produce reliable and valid measurements of reasoning, memory, and planning. Their norming sample exceeds 100,000 participants across multiple countries.

Expert Perspectives on Online IQ Test Accuracy

David Hunt, Chief Operating Officer at Versys Media, evaluates cognitive-style assessments from a product and data perspective, focusing on how measurements behave once they are exposed to thousands of real-world users.

"Most public online IQ tests sit far away from traditional, supervised assessments in three core areas: control, standardization, and validation. Unless platforms actively log, filter, and model user behavior, raw scores can reflect engagement patterns rather than underlying cognitive ability."

- David Hunt, COO, Versys Media

In supervised environments, administrators control timing, instructions, identity verification, and user engagement. Online environments introduce uncertainty. Users may multitask, repeat tests, search for answers, abandon sessions midway, or approach the test casually.

Higher-quality platforms attempt to reduce these distortions through:

- Strict timing enforcement - preventing unlimited deliberation or rushed guessing

- Adaptive question delivery - adjusting difficulty to maintain measurement precision

- Anomaly detection - flagging suspiciously fast or slow response patterns

- Continuous recalibration - updating norms as the user base grows and diversifies
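Anomaly detection on response times can be sketched simply. This example flags answers faster than a hard floor, which may indicate guessing. It also flags answers whose modified z-score exceeds the commonly used 3.5 cutoff; the modified z-score is based on the median absolute deviation (MAD) and is robust to outliers. A long pause flagged this way might mean the test-taker looked something up. The 2-second floor, the cutoff, and the session data are illustrative assumptions. A real platform would more likely compare each answer against per-item timing norms.

```python
from statistics import median
from typing import List


def flag_responses(times_s: List[float], floor_s: float = 2.0,
                   cutoff: float = 3.5) -> List[int]:
    """Return indices of response times that look anomalous.

    An answer is flagged if it is faster than a hard floor or if its
    modified z-score (0.6745 * deviation from the median / MAD) exceeds
    the cutoff in either direction.
    """
    med = median(times_s)
    mad = median([abs(t - med) for t in times_s])
    flagged = []
    for i, t in enumerate(times_s):
        robust_z = 0.6745 * (t - med) / mad if mad > 0 else 0.0
        if t < floor_s or abs(robust_z) > cutoff:
            flagged.append(i)
    return flagged


# Invented per-question response times in seconds for one session.
times = [14.2, 11.8, 1.1, 16.5, 12.9, 95.0, 13.4, 0.9, 15.1]
print(flag_responses(times))
```

For this sample session, the sketch flags indices 2, 5, and 7: the two sub-2-second answers and the 95-second pause.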

A visually polished interface or complex-looking questions do not guarantee scientific rigor. The most important work happens behind the scenes in item banking, pretesting, bias analysis, and ongoing validation.

What Makes an Online IQ Test Credible: A Checklist

Drawing from expert analysis and established psychometric standards, a credible online IQ test demonstrates several core characteristics:

- Transparent methodology - explains how items are created, scored, and updated

- Published or summarized validation evidence with actual statistical metrics

- Strong internal consistency (Cronbach's alpha > 0.80) across test items

- Reasonable stability of scores under similar conditions over time (test-retest r > 0.75)

- Clear convergent validity with established intelligence measures (r > 0.70)

- Realistic and well-defined normative data from a large, diverse sample

- Explicit guidance on appropriate and inappropriate uses of results

- Reports confidence intervals rather than single-point scores

Red Flags That Indicate Low Quality

- Every test-taker scores above average

- No information about norming population or sample size

- No mention of reliability, validity, or standard error

- Results change dramatically on retake

- The platform encourages sharing scores on social media as its primary purpose

Limitations and Responsible Use

Online IQ tests are not clinical diagnoses. They should not be used for medical decisions, educational placement, employment screening, or legal determinations without professional supervision.

When designed and interpreted responsibly, however, they can offer meaningful value:

- Self-insight - understanding cognitive strengths and relative weaknesses

- Benchmarking - getting a general sense of where you fall on the population distribution

- Tracking - observing broad trends over time with repeated testing

- Education - learning how intelligence is measured and what psychometric concepts mean

Lower-quality tests tend to be opaque, entertainment-driven, and optimized for virality rather than measurement accuracy. These tests often exaggerate claims, hide methodology, and present results without context.

Understanding these distinctions allows users to make informed choices about which assessments deserve trust.

TL;DR

The data shows that online IQ tests are not inherently inaccurate. Well-designed online tests with adaptive items, proper norming, and transparent methodology achieve correlations of r = 0.78 to 0.85 with clinical assessments like the WAIS-IV. Entertainment-style quizzes, by contrast, correlate at r = 0.20 to 0.40 and provide little meaningful information. The key is evaluating each test against psychometric benchmarks - not dismissing the entire category.

References

- Deary, I. J. (2012). Intelligence. Annual Review of Psychology, 63, 453-482. https://doi.org/10.1146/annurev-psych-120710-100353

- Wechsler, D. (2008). Wechsler Adult Intelligence Scale - Fourth Edition (WAIS-IV). San Antonio, TX: Pearson.

- Raven, J., Raven, J. C., & Court, J. H. (2003). Manual for Raven's Progressive Matrices and Vocabulary Scales. San Antonio, TX: Harcourt Assessment.

- Nunnally, J. C., & Bernstein, I. H. (1994). Psychometric Theory (3rd ed.). New York: McGraw-Hill.

- American Educational Research Association, American Psychological Association, & National Council on Measurement in Education. (2014). Standards for Educational and Psychological Testing. Washington, DC: AERA.

- Jensen, A. R. (1998). The g Factor: The Science of Mental Ability. Westport, CT: Praeger.

- Flynn, J. R. (2007). What Is Intelligence? Beyond the Flynn Effect. Cambridge: Cambridge University Press.

- Hampshire, A., Highfield, R. R., Parkin, B. L., & Owen, A. M. (2012). Fractionating human intelligence. Neuron, 76(6), 1225-1237.

Frequently Asked Questions

Are online IQ tests scientifically valid?

Some online IQ tests achieve ***strong scientific validity***, with convergent validity correlations of **r = 0.70 to 0.85** against established clinical instruments like the WAIS-IV. The key indicators of validity are transparent methodology, large norming samples (ideally over 10,000 participants), multi-domain assessment covering reasoning, memory, and spatial ability, and published reliability metrics such as Cronbach's alpha above 0.80. Entertainment-focused tests that provide no psychometric data should not be considered scientifically valid. To experience a well-structured assessment, try our [full IQ test](/en/full-iq-test).

How accurate are online IQ tests compared to supervised tests?

Research data shows that well-designed online IQ tests correlate at **r = 0.78 to 0.85** with proctored clinical assessments. In practical terms, take a correlation of r = 0.80 and a clinical result at the 75th percentile: a quality online test will on average place you near the 70th percentile, with about two-thirds of comparable test-takers falling between roughly the 48th and 87th percentile. The main sources of discrepancy are environmental distractions, lack of proctor oversight, and variable motivation. Platforms that use adaptive item selection and strict timing controls produce the most accurate results. Our [timed IQ test](/en/iq-test) implements these controls.

Can an online IQ test diagnose intelligence or giftedness?

No. Online IQ tests are ***not diagnostic tools*** and should never be used for clinical, educational, or professional decisions without additional professional evaluation. They can provide a *reasonable estimate* that may indicate whether formal assessment is warranted. Mensa's internal data suggests online screening tests correctly predict qualifying scores approximately 75-80% of the time, which is useful for self-assessment but insufficient for formal determinations.

Why do different IQ tests give different scores?

Score variation between tests arises from multiple measurable factors: different **norming populations** (age, geography, education level), different **cognitive domains tested** (verbal vs. non-verbal emphasis), different **scoring models** (IRT vs. classical test theory), and different **test lengths** (which affect reliability). A 20-item test typically has a standard error of measurement about 1.5 to 1.7 times that of an otherwise comparable 60-item test. Additionally, the Flynn effect means tests normed in different decades may produce systematically different scores.

What does percentile ranking mean in an IQ test?

A percentile rank shows how your score compares to a reference population. Scoring in the **75th percentile** means you performed better than 75% of the norming sample. Common benchmarks: 50th percentile = IQ 100 (average), 84th percentile = IQ 115 (one standard deviation above), 98th percentile = IQ 130 (two standard deviations above, the gifted range). Percentiles are often easier to interpret than IQ scores because they state directly what share of the norming sample scored lower. Within a single test, though, each is a fixed conversion of the other, so both depend equally on the quality of that test's norms.

Is a quick IQ test less reliable than a longer one?

Generally, yes. The **Spearman-Brown prophecy formula** in psychometrics shows that reliability increases with test length, provided the added items measure the same ability. A 15-item test typically achieves Cronbach's alpha around 0.70-0.75, while a 40-item test can reach 0.85-0.92. Short tests can still provide useful screening information, but their confidence intervals are wider. At 95% confidence, an alpha of 0.70-0.75 implies a band of roughly plus or minus 15 points, versus roughly plus or minus 8-11 points at an alpha of 0.85-0.92. Our [quick IQ assessment](/en/quick-iq-test) is designed to maximize information per item using adaptive methodology.
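Here is a minimal sketch of the Spearman-Brown prophecy formula, paired with the resulting 95% score band on the IQ scale (SD 15). The formula assumes the added items are parallel to the existing ones. The starting alpha of 0.72 for a 15-item test is an illustrative assumption.

```python
def spearman_brown(reliability: float, length_factor: float) -> float:
    """Predicted reliability when test length is multiplied by length_factor,
    assuming the added items are parallel to the existing ones."""
    k, r = length_factor, reliability
    return k * r / (1 + (k - 1) * r)


def ci95_half_width(reliability: float, sd: float = 15.0) -> float:
    """Half-width of a 95% band: 1.96 * SEM, where SEM = SD * sqrt(1 - reliability)."""
    return 1.96 * sd * (1 - reliability) ** 0.5


r15 = 0.72  # assumed alpha for a hypothetical 15-item test
r40 = spearman_brown(r15, 40 / 15)
print(f"15 items: alpha {r15:.2f}, 95% band +/- {ci95_half_width(r15):.1f} points")
print(f"40 items: alpha {r40:.2f}, 95% band +/- {ci95_half_width(r40):.1f} points")
```

Under the same assumptions, tripling a 20-item test with alpha 0.75 raises alpha to 0.90. It also shrinks the standard error by a factor of about 1.6 (the square root of 2.5), not 2.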

Do online IQ tests measure all types of intelligence?

Most online IQ tests focus on **fluid intelligence** - analytical reasoning, pattern recognition, and abstract problem-solving. They typically do not measure *crystallized intelligence* (vocabulary, general knowledge), *creative intelligence* (divergent thinking, novel solutions), *emotional intelligence* (social perception, empathy), or *practical intelligence* (real-world problem-solving). Howard Gardner's theory of multiple intelligences identifies at least eight distinct domains, of which standard IQ tests address only two or three. Our [practice test](/en/practice-iq-test) covers multiple reasoning domains for a broader assessment.

Can practicing IQ tests improve your score?

Research by Sternberg and Grigorenko (2002) found that practice effects on IQ tests typically produce gains of **3 to 7 points** on initial retakes, primarily from increased familiarity with question formats and reduced test anxiety. These gains plateau quickly. Core cognitive abilities measured by well-designed tests - such as working memory capacity and processing speed - show much smaller practice effects. Tests using large item banks and adaptive algorithms minimize practice effects by presenting different questions on each attempt.

How should I use my online IQ test result responsibly?

Treat online IQ results as ***informational estimates***, not definitive measurements. Best practices include: (1) take the test in a quiet, distraction-free environment to minimize environmental noise, (2) consider the confidence interval - a score of 115 likely means your true score falls between 108 and 122, (3) compare results across at least two well-designed tests rather than relying on a single administration, (4) use results to identify relative cognitive strengths rather than fixating on the overall number, and (5) never use online results for high-stakes decisions without professional follow-up.

What should I look for before trusting an online IQ test?

Evaluate any online IQ test against these **five critical criteria**: (1) Does it report psychometric data such as reliability coefficients and norming sample size? (2) Does it test multiple cognitive domains, not just one type of puzzle? (3) Does it use timed administration with adaptive difficulty? (4) Does it report confidence intervals or score ranges rather than single-point scores? (5) Does it clearly state limitations and appropriate uses? Tests meeting all five criteria are the most likely to produce scores close to a clinical assessment. Even so, at correlations of r = 0.78 to 0.85, roughly one in three test-takers would see the two scores differ by more than **8 to 10 points**. Tests meeting none are essentially entertainment.

Curious about your IQ?

You can take a free online IQ test and get instant results.
